Policy Prototyping: an assessment of Articles 5 and 6 of the European AI Act

21.10.2022

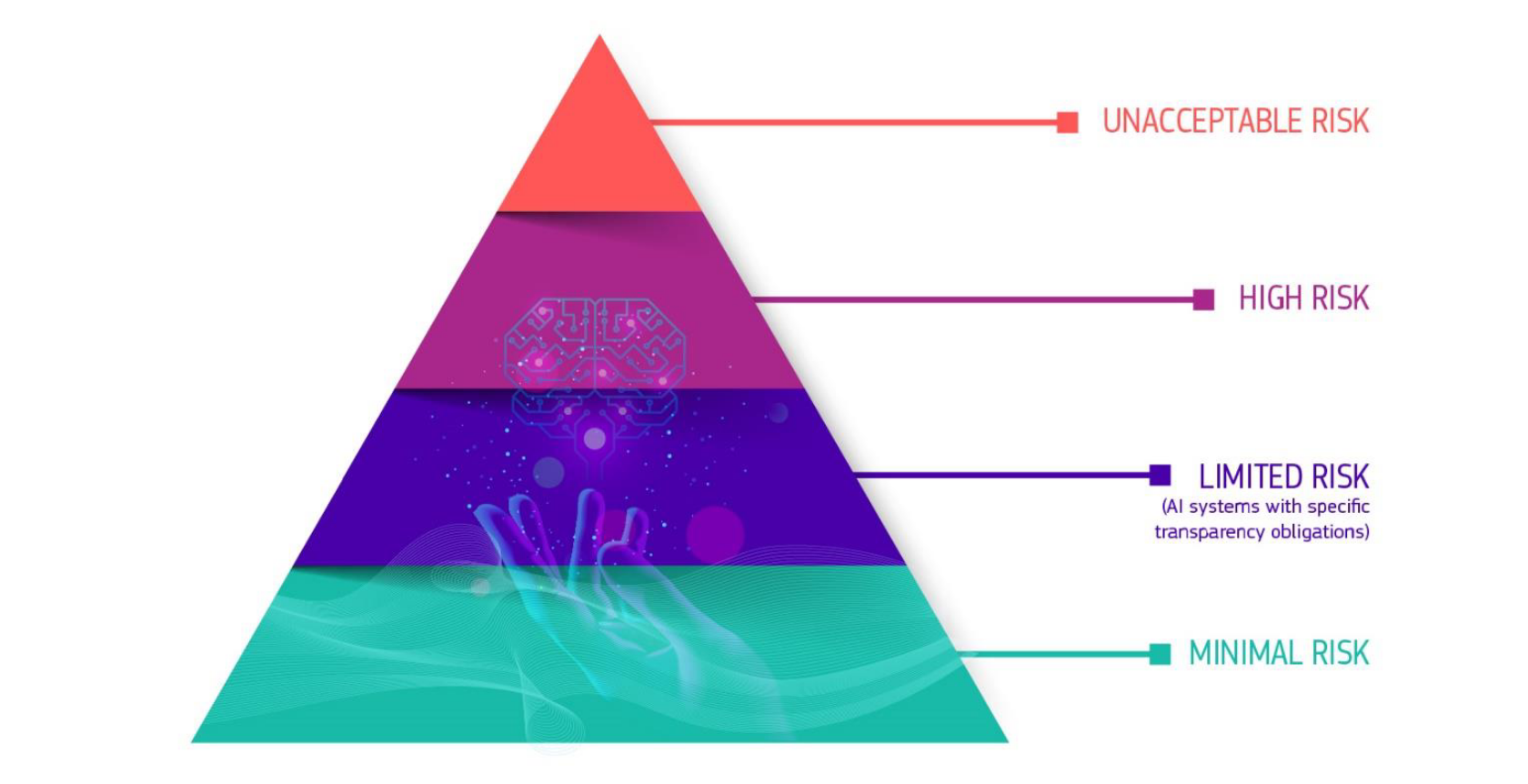

In April 2021, the European Commission published its proposal for an EU AI Act. This policy proposal will likely have a significant impact, but there is still a long road ahead before the Act is finalized and enters into force. In the meantime, the Knowledge Centre Data & Society undertook a policy prototyping exercise with the first draft. More specifically, we asked stakeholders for feedback on the definition of AI and on articles 5 and 6 of the AI Act, which deal with prohibited and high-risk AI applications respectively.

Main conclusions:

Participants whose AI application falls under the high-risk or prohibited categories of the AI Act often disagree with that assessment

The definitions and descriptions in Article 6 (high-risk uses) are unclear and create uncertainty

Participants reported few to no problems with the descriptions and definitions in Article 5 (prohibited uses)

Policy prototyping?

Policy prototyping (PP) refers to an innovative way of policy making, similar to product or beta testing. It involves a phase in which envisaged rules are tested in practice before being finalized and promulgated. By conducting a policy prototyping exercise, the Knowledge Centre aimed to better assess the understandability and usability of articles 5 and 6 of the AI Act. It did so by consulting a range of Flemish stakeholders.

To assess those two articles, we created two surveys for providers and developers of AI applications. The first survey was designed as a checklist to help evaluate whether or not an AI application would fall under the scope of the two articles (i.e. prohibited or high-risk category). The second survey focused on the understandability and usability of the concepts used in the articles.

Outcome of our exercise

The first striking finding is that the majority of respondents indicated that the definition of AI, as proposed by the AI Act, is clear. This is surprising considering the ongoing policy debate and the input we received from stakeholders during discussions outside of this exercise. The majority also indicated that the definitions of AI techniques in Annex I of the AI Act are clear. This is notable given that the list includes some very broad terms (e.g. statistical approaches or search and optimisation methods). Moreover, no respondent seemed to have an issue with the alleged lack of ‘technological neutrality’ of this list (i.e. its reference to only currently known AI approaches).

The second noteworthy finding from the survey results is the high number of AI applications that fall within the scope of the high-risk category. More specifically, 58 percent of survey participants indicated that their AI system is considered high risk under the AI Act, which is a significant proportion. However, 55 percent of this group disagree with the risk level attributed to their applications.
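As a quick check on how these two percentages combine, the short sketch below computes the share of all participants who were both classified as high risk and disagreed with that classification. It uses only the two figures reported above; the variable names are illustrative, and the result is a derived figure, not one reported in the survey.

```python
# Figures reported in the survey results above.
high_risk_share = 58   # percent of all participants classified as high risk
disagree_within = 55   # percent of that high-risk group who disagree

# Share of ALL participants who are high risk AND disagree.
# This is derived arithmetic, not a number stated in the survey.
high_risk_and_disagree = high_risk_share * disagree_within / 100

print(f"{high_risk_and_disagree:.1f} percent of all participants")
```

In other words, roughly a third of all participants both fall in the high-risk category and contest that outcome.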

Among participants whose AI application does not belong to the prohibited or high-risk category, there is less disagreement: 63 percent agree with the result, and only a small percentage of participants think their AI applications should fall under a stricter regime.

Categories from Article 6 (high-risk uses) still raise many questions

Participants were also invited to provide feedback on the definitions and concepts used in articles 5 and 6 of the draft AI Act. More specifically, they were asked to assess the understandability and clarity of the various concepts used. Below, we present some insights from the survey.

Participants were asked to review the definition of the high-risk category. The AI Act distinguishes two types of high-risk AI systems. In the first, the AI system is a product, or a safety component of a product, covered by certain Union harmonisation legislation (listed in Annex II, section A of the AI Act), and that product is required to undergo a third-party conformity assessment. In the second, the AI system is applied in a certain sector for a specific purpose (as listed in Annex III).
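The two routes into the high-risk category can be read as a simple decision rule. The following is a minimal sketch of that reading, not the checklist used in the survey and not a legal test: the class, field, and function names are hypothetical, and each boolean stands in for a legal assessment that in practice requires interpretation.

```python
from dataclasses import dataclass


@dataclass
class AISystem:
    # All field names are hypothetical, chosen for this illustration only.
    is_product_or_safety_component: bool   # product, or safety component of a product
    covered_by_annex_ii_legislation: bool  # Union harmonisation legislation applies
    requires_third_party_assessment: bool  # product needs third-party conformity assessment
    has_annex_iii_purpose: bool            # sector and purpose listed in Annex III


def is_high_risk(system: AISystem) -> bool:
    """Return True if either of the two routes described above applies."""
    # Route 1: all three product-related conditions must hold together.
    product_route = (
        system.is_product_or_safety_component
        and system.covered_by_annex_ii_legislation
        and system.requires_third_party_assessment
    )
    # Route 2: the system's sector and purpose appear in Annex III.
    purpose_route = system.has_annex_iii_purpose
    return product_route or purpose_route


# Hypothetical example: a credit-scoring tool that is not a regulated product.
credit_scoring = AISystem(False, False, False, True)
print(is_high_risk(credit_scoring))
```

Note that the first route is cumulative while the second stands on its own, so uncertainty about any single input (for example, whether a component counts as a safety component) carries through directly to the outcome.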

Regarding the first type of high-risk AI systems, participants found that the harmonisation legislation listed in Annex II is in many cases not specific enough.

Concerning the second type of high-risk AI systems, we assessed the description of the different sectors and purposes. Regarding 'biometric identification and categorisation of natural persons', the description of the purpose (i.e. AI systems intended to be used for 'real-time' and 'post' remote biometric identification of natural persons) still raises many questions.

For example, the use of the term ‘remote’ creates uncertainty. It is not clear to participants whether this is about physical distance and what this means for fingerprint-based identification. The description of the sector also leads to confusion. Namely, it points to both 'identification' and 'categorisation', while the latter term is not contained in the actual purpose description. Indeed, there is no mention of assigning a person to a category in the purpose description.

Concerns also emerge regarding the sector of 'Management and operation of critical infrastructure', where the use of AI systems as safety components in the management and operation of road traffic and the supply of water, gas, heating and electricity is considered to be high risk.

The text defines safety component as follows: a component of a product or of a system which fulfils a safety function for that product or system or the failure or malfunctioning of which endangers the health and safety of persons or property.

For some participants, the term 'safety components' is too general. Participants suggested including concrete examples in the preamble or recitals. The definition is also broader than the term suggests: many parts of a product or system can cause health damage or safety risks if they fail or malfunction, even though they would not normally be called a 'safety component'. One of the participants gave the following example: “e.g. pressure relief valve of a high-pressure cooking pot is a safety component. But the lid shouldn’t be categorised as such. However, a sudden crack in the lid (system component) can lead to health risks in case of failure. This makes the lid also a safety component.”
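The participant's pressure-cooker example can be made concrete by writing the quoted definition as a predicate with two alternative limbs. This is a minimal sketch under our own reading of the definition; the class, field, and function names are hypothetical, and the values assigned to the valve and the lid simply follow the participant's description.

```python
from dataclasses import dataclass


@dataclass
class Component:
    # Hypothetical fields mirroring the two limbs of the quoted definition.
    name: str
    fulfils_safety_function: bool  # limb 1: the part exists to provide safety
    failure_endangers: bool        # limb 2: failure endangers persons or property


def is_safety_component(component: Component) -> bool:
    # The definition joins the two limbs with "or", so either one is enough.
    return component.fulfils_safety_function or component.failure_endangers


valve = Component("pressure relief valve", fulfils_safety_function=True, failure_endangers=True)
lid = Component("lid", fulfils_safety_function=False, failure_endangers=True)

for component in (valve, lid):
    print(component.name, is_safety_component(component))
```

Both parts satisfy the predicate. Because the second limb applies on its own, the lid qualifies even though it has no dedicated safety function, which is the breadth the participant objected to.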

The definition of a safety component is also incomplete, according to some. In addition to people and property, the scope of the potential danger should also include animals or even flora.

The fifth sector of high-risk applications concerns "Access to and enjoyment of essential private and public services and benefits". The second specific purpose under that sector refers to “AI systems intended to be used to evaluate the creditworthiness of natural persons or establish their credit score, with the exception of AI systems put into service by small-scale providers for their own use”.

According to several participants, the reference to “AI systems put into service by small-scale providers for their own use” creates ambiguity. Who counts as a small-scale provider? Does the term refer to SMEs? At what point is a provider no longer small-scale?

The term 'own use' was also considered too vague by some participants. Finally, the question was asked why there should be any difference at all between large and small users in terms of their own use.

Conclusions and way forward

The first policy prototyping exercise (or rather experiment) led to some interesting findings, but their importance should be put in perspective. Firstly, only a limited number of stakeholders provided feedback, which limits the representativeness of the findings. Secondly, our survey approach did not produce results that enabled us to propose improved wording for the AI Act. This should, however, be the goal of policy prototyping: not only to test the proposed policy, but also to improve it. Therefore, we will adopt a new policy prototyping approach in the future, with increased stakeholder involvement and more interaction.

Authors:

Frederic Heymans

Thomas Gils